Generating plants has been one of the major successes of computer graphics over the last few decades. Most people would be surprised at how often the plants in a film or advert are virtual copies of the real thing.

A simple way to create plants is to use Lindenmayer systems (L-systems), which take a starting string and, over a number of steps, rewrite it according to simple rules.

For example, we could start with the character A and, on every iteration, replace each A with AB and each B with A, applying both rules to the whole string at once. This gives us a series that looks like:

A

AB

ABA

ABAAB

ABAABABA

ABAABABAABAAB

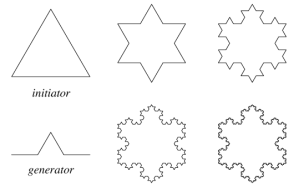

and so on. To get a picture out of this, we can treat the characters as directions for an image drawer (things like turn left, turn right, draw forwards), and with the right rules we’ve quickly produced some nice little snowflakes.
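
The rewriting step itself is only a few lines of code. Here is a minimal sketch in Python, assuming the rules are stored in a dictionary; the names `rewrite` and `RULES` are my own and not from any library.

```python
def rewrite(axiom: str, rules: dict[str, str], steps: int) -> list[str]:
    """Return the axiom followed by each successive rewriting of it."""
    generations = [axiom]
    for _ in range(steps):
        current = generations[-1]
        # Every symbol is replaced in the same pass; a symbol with no rule
        # is copied across unchanged.
        generations.append("".join(rules.get(symbol, symbol) for symbol in current))
    return generations


# The two rules from the example: A becomes AB, B becomes A.
RULES = {"A": "AB", "B": "A"}

for generation in rewrite("A", RULES, 5):
    print(generation)
```

Run with five steps, this prints the same six strings as the series above.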

We can generate all sorts of interesting shapes by making tiny additions to those initial rules, such as the ability to jump forwards without drawing or to change colour. All in all, it is a very simple way to make some familiar-looking patterns.
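
To make the drawing idea concrete, here is a rough sketch of an interpreter that turns a command string into line segments. The symbol meanings are an assumption on my part, following a common convention: `F` draws forwards, `f` jumps forwards without drawing, `+` turns left and `-` turns right. The snowflake rule used is the well-known Koch curve, not necessarily the one behind the image above, and colour changes are left out.

```python
import math


def rewrite(axiom, rules, steps):
    """Apply the rewriting rules to the whole string, `steps` times."""
    text = axiom
    for _ in range(steps):
        text = "".join(rules.get(symbol, symbol) for symbol in text)
    return text


def trace(commands, angle_degrees, step=1.0):
    """Walk the command string and return the line segments that get drawn."""
    x, y, heading = 0.0, 0.0, 0.0
    segments = []
    for symbol in commands:
        if symbol in ("F", "f"):
            new_x = x + step * math.cos(math.radians(heading))
            new_y = y + step * math.sin(math.radians(heading))
            if symbol == "F":
                # Only "F" leaves a mark; "f" just moves the pen.
                segments.append(((x, y), (new_x, new_y)))
            x, y = new_x, new_y
        elif symbol == "+":
            heading += angle_degrees  # turn left
        elif symbol == "-":
            heading -= angle_degrees  # turn right
        # Any other symbol is ignored by the drawer.
    return segments


# Koch snowflake: start from a triangle and put a bump on every edge.
snowflake = rewrite("F--F--F", {"F": "F+F--F+F"}, 3)
segments = trace(snowflake, 60.0)
print(len(segments), "segments")
```

The segments can then be handed to whatever plotting or image library you prefer.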

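Things get more dynamic if we allow the algorithm to maintain a stack of saved drawing states (in practice, transformation matrices). The drawer can then save its current state, draw a side shoot, and return to where it was, which lets the shape branch, a bit like a tree.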
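
Here is a small sketch of how the stack gives us branching. It assumes the usual bracket convention, where `[` saves the current state and `]` restores the most recently saved one. To keep things short, the saved state here is just a position and heading in 2D rather than a full matrix, and the rule and angle are only an example.

```python
import math


def rewrite(axiom, rules, steps):
    """Apply the rewriting rules to the whole string, `steps` times."""
    text = axiom
    for _ in range(steps):
        text = "".join(rules.get(symbol, symbol) for symbol in text)
    return text


def trace_branches(commands, angle_degrees, step=1.0):
    """Return drawn segments, using a stack to come back from side branches."""
    x, y, heading = 0.0, 0.0, 90.0  # start at the origin, pointing up
    stack = []
    segments = []
    for symbol in commands:
        if symbol == "F":
            new_x = x + step * math.cos(math.radians(heading))
            new_y = y + step * math.sin(math.radians(heading))
            segments.append(((x, y), (new_x, new_y)))
            x, y = new_x, new_y
        elif symbol == "+":
            heading += angle_degrees  # turn left
        elif symbol == "-":
            heading -= angle_degrees  # turn right
        elif symbol == "[":
            stack.append((x, y, heading))  # remember where the branch starts
        elif symbol == "]":
            # Jump back to the branch point; an unmatched "]" raises IndexError.
            x, y, heading = stack.pop()
    return segments


# Each stem sprouts one shoot to the left and one to the right.
plant = rewrite("F", {"F": "F[+F]F[-F]F"}, 4)
print(len(trace_branches(plant, 25.7)), "segments")
```

Everything drawn between a `[` and its matching `]` becomes a side branch, and the main stem carries on from where it left off.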

Move into the realm of 3D and we begin to get some very lifelike trees. Of course, trees are not made up of identical sticks from root to branch, so we need to add a little stochastic sampling (randomness in the growth), as well as the idea that the tree grows seasonally and that a trunk grows differently from a branch, a leaf or a flower. All of this can be managed with some imagination.
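
The randomness is the easiest of these to sketch. One way to do it, assuming each symbol can have several weighted replacements to pick from, is shown below. The rule set and the name `STOCHASTIC_RULES` are made up for illustration, and the sketch does not attempt seasonal growth or separate rules for trunks, leaves and flowers.

```python
import random

# Hypothetical rule set: each symbol maps to (replacement, weight) pairs.
STOCHASTIC_RULES = {
    "F": [
        ("F[+F]F[-F]F", 1.0),  # shoots on both sides
        ("F[+F]F", 1.0),       # a shoot on the left only
        ("F[-F]F", 1.0),       # a shoot on the right only
    ],
}


def rewrite_stochastic(axiom, rules, steps, seed=None):
    """Rewrite the string, picking one weighted replacement per symbol."""
    rng = random.Random(seed)  # a fixed seed regrows the same plant
    text = axiom
    for _ in range(steps):
        pieces = []
        for symbol in text:
            options = rules.get(symbol)
            if options is None:
                pieces.append(symbol)  # no rule: copy the symbol unchanged
                continue
            replacements = [replacement for replacement, _ in options]
            weights = [weight for _, weight in options]
            pieces.append(rng.choices(replacements, weights=weights, k=1)[0])
        text = "".join(pieces)
    return text


# Different seeds generally give different plants from the same rules.
for seed in (1, 2, 3):
    print(rewrite_stochastic("F", STOCHASTIC_RULES, 3, seed=seed))
```

Because every occurrence of a symbol makes its own choice, two plants grown from the same rules look related without being identical.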

To get an idea of how far this has come, check out the virtual arboretum managed by SpeedTree, a company which specializes in dreaming up virtual flora. For more detail on how the magic happens, I’d recommend The Algorithmic Beauty of Plants by Przemyslaw Prusinkiewicz and Aristid Lindenmayer.